Interactive Experiences

This collection represents an ongoing exploration of the boundary between human input and digital response, approached through TouchDesigner. By synthesizing real-time data, from audio frequencies to kinetic motion and manual attractors, I engineer systems in which static geometry is transformed into living, sculptural entities. Drawing on the methods of leading industry educators, these studies focus on the balance between mathematical precision and organic, fluid aesthetics. Each project serves as a technical blueprint for immersive environments, using feedback loops, vertex displacement, and GPU-accelerated particle systems to translate invisible data into a tangible, luminous experience.

Audio-reactive Bloomy Circles

In this TouchDesigner study, a rhythmic, radial particle system emerges from techniques explored in The Interactive & Immersive HQ tutorials. Real-time audio analysis, processed via Audio File In and Audio Analysis CHOPs, acts as the primary driver, modulating the scale and position of circular instances. By smoothing the analysis values through a chain of Math and Lag CHOPs, the network produces a responsive visual pulse that drives the expansion and contraction of the rings. To heighten the sensory impact, a Feedback TOP loop and an Edge TOP produce a ghostly trail of motion history, while a final Bloom pass adds the luminous glow that turns raw audio data into a living geometric performance.
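The smoothing chain can be illustrated outside TouchDesigner. The sketch below is plain Python, not TouchDesigner network code, and rests on several assumptions: one RMS level per frame stands in for the analysis output, a clamped linear remap stands in for the Math CHOP's range step, and a one-pole smoother with separate rise and fall times approximates Lag CHOP behaviour. The names (`ring_scales`, `AsymmetricLag`) and constants (0.05 s rise, 0.4 s fall, a 1 to 3 scale range) are hypothetical.

```python
import math


def rms(frame):
    """Root-mean-square level of one block of samples (stand-in for the analysis output)."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def remap(value, from_lo, from_hi, to_lo, to_hi):
    """Clamped linear range remap, similar in spirit to a Math CHOP range step."""
    t = (value - from_lo) / (from_hi - from_lo)
    t = min(max(t, 0.0), 1.0)  # clamp so loud peaks cannot blow up the ring size
    return to_lo + t * (to_hi - to_lo)


class AsymmetricLag:
    """One-pole smoother with separate rise and fall times (hypothetical Lag CHOP approximation)."""

    def __init__(self, lag_up, lag_down, rate):
        self.lag_up = lag_up      # seconds to follow a rising signal
        self.lag_down = lag_down  # seconds to follow a falling signal
        self.rate = rate          # updates per second, e.g. the frame rate
        self.value = 0.0

    def step(self, target):
        lag = self.lag_up if target > self.value else self.lag_down
        if lag <= 0:
            self.value = target
        else:
            # After `lag` seconds the output has covered about 63% of the gap.
            alpha = 1.0 - math.exp(-1.0 / (lag * self.rate))
            self.value += alpha * (target - self.value)
        return self.value


def ring_scales(frames, rate=60.0):
    """Turn a sequence of audio frames into smoothed ring scales in [1, 3]."""
    lag = AsymmetricLag(lag_up=0.05, lag_down=0.4, rate=rate)
    scales = []
    for frame in frames:
        level = remap(rms(frame), 0.0, 0.5, 0.0, 1.0)
        scales.append(1.0 + 2.0 * lag.step(level))
    return scales


loud = [0.5, -0.5] * 256
silent = [0.0] * 512
print([round(s, 2) for s in ring_scales([loud] * 10 + [silent] * 10)])
```

The short rise time lets the rings snap outward on a beat, while the longer fall time makes them contract gradually instead of flickering, which is the usual reason to lag rising and falling values differently.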

Mouse-Controlled Dissolution

This project is a dynamic, real-time visual experience developed in TouchDesigner. I designed an interactive 3D sphere that continuously breaks apart and re-forms through a tightly controlled particle system. The core interaction is driven by the user's mouse position (X and Y coordinates), which directly influences the shape, movement, and visual intensity of the disintegrating sphere. To achieve its fluid, ethereal aesthetic, I used the Feedback TOP technique, which continuously accumulates and blends frames to create trailing, evolving particle effects that transform with user input.

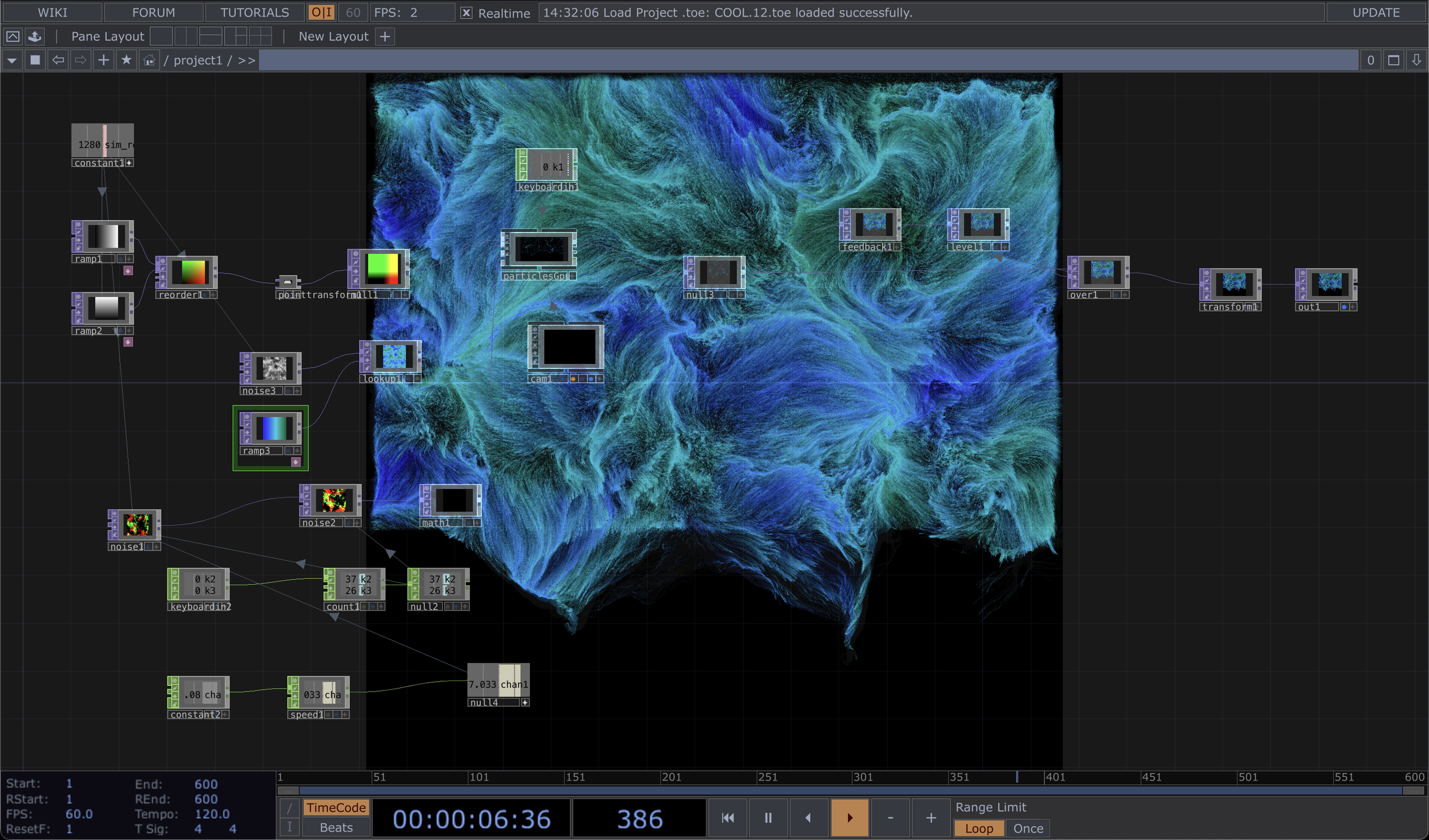
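The frame accumulation behind a feedback loop reduces to one rule: each output frame is the new source plus a dimmed, slightly offset copy of the previous output. This is a minimal plain-Python sketch on a tiny grayscale grid rather than GPU textures, assuming mouse coordinates normalized to [-1, 1]. `feedback_frame`, `mouse_to_params`, and their constants are hypothetical and are not the project's actual parameters.

```python
def feedback_frame(prev, source, decay, shift):
    """One pass of a feedback loop: new source plus a dimmed, shifted copy of the last output."""
    h, w = len(prev), len(prev[0])
    dx, dy = shift
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sx, sy = x - dx, y - dy  # read the previous frame from the offset position
            echo = prev[sy][sx] if 0 <= sx < w and 0 <= sy < h else 0.0
            out[y][x] = min(1.0, source[y][x] + decay * echo)  # clamp to [0, 1]
    return out


def mouse_to_params(mx, my):
    """Hypothetical mapping from normalized mouse coords in [-1, 1] to shift and decay."""
    shift = (round(mx * 2), round(my * 2))  # up to 2 pixels of drift per frame
    decay = 0.80 + 0.15 * (my + 1) / 2     # higher mouse Y means longer trails
    return shift, decay


size = 5
blank = [[0.0] * size for _ in range(size)]
dot = [row[:] for row in blank]
dot[2][2] = 1.0  # a single bright "particle" in the centre

frame = feedback_frame(blank, dot, decay=0.5, shift=(1, 0))
for _ in range(2):
    frame = feedback_frame(frame, blank, decay=0.5, shift=(1, 0))
print(frame[2])  # the dot has drifted right and faded
```

Because every echo is multiplied by `decay` once per frame, a value below 1 guarantees the trails fade; values close to 1 produce the long, smeared history that gives feedback its ethereal look.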

GPU Flow Field

This generative art project focused on creating complex, fluid motion and evolving shapes within TouchDesigner. I engineered a high-performance particle field using the Particles GPU framework, initialized as a dense grid. The movement comes from procedural Noise TOPs that drive the particle system's Optical Flow input, producing its fluid, organic curves. To enhance the visual impact, I integrated a Feedback loop that generates distinct particle trails and evolving visual paths. The project demonstrates advanced particle manipulation, procedural control, and highly detailed real-time generative effects.
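The idea of a noise-driven flow field can be sketched on the CPU: particles start on a grid, and each step they move along a direction read from a smooth scalar field. This plain-Python version is only an illustration under stated assumptions. The sin/cos field is a hypothetical stand-in for a Noise TOP (it is not Perlin noise), the project fed noise through the Particles GPU optical-flow input rather than computing angles like this, and a bounded position history replaces the Feedback loop used for trails.

```python
import math
from collections import deque


def flow_angle(x, y, t, freq=0.15):
    """Smooth pseudo-noise angle field (hypothetical stand-in for a Noise TOP)."""
    return math.pi * (math.sin(x * freq + t) + math.cos(y * freq * 1.3 - 0.7 * t))


def make_grid(cols, rows, spacing=1.0):
    """Initialise particles as a dense, evenly spaced grid."""
    return [[c * spacing, r * spacing] for r in range(rows) for c in range(cols)]


def advect(particles, trails, t, speed=0.5, dt=1.0):
    """Move every particle one step along the field and record its new position."""
    for i, p in enumerate(particles):
        a = flow_angle(p[0], p[1], t)
        p[0] += math.cos(a) * speed * dt
        p[1] += math.sin(a) * speed * dt
        trails[i].append((p[0], p[1]))  # bounded history stands in for a feedback trail


def simulate(cols=20, rows=20, steps=30, trail_len=10):
    particles = make_grid(cols, rows)
    trails = [deque(maxlen=trail_len) for _ in particles]
    for step in range(steps):
        advect(particles, trails, t=step * 0.05)
    return particles, trails


particles, trails = simulate()
print(len(particles), list(trails[0])[:3])
```

Since neighbouring particles sample nearly the same angle, they travel in coherent streams, which is what produces the organic curves; slowly advancing `t` makes the whole pattern evolve.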

Dancing with Kinect

Drawing on the technical frameworks shared by the reflekkt YouTube channel, I designed this TouchDesigner installation as a high-energy translation of human kinetics into generative art. Rather than simply mirroring a user, the system interprets Kinect motion data to sculpt a dense field of horizontal scanlines, creating a vibrant, distorted representation of the body's movement. This visual energy is further modulated by audio-reactive procedural Noise, which pulses through a fiery color gradient in sync with real-time sound frequencies. By using Trail and Math CHOPs to refine these inputs, the project turns fleeting gestures into a persistent, glowing narrative of light and rhythm.
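One way to picture the scanline displacement: keep a short history of a tracked joint (the role a Trail CHOP plays) and bend each horizontal line upward wherever it passes near a recent joint position, with newer positions pushing harder. This plain-Python sketch assumes joint coordinates normalized to [0, 1] and uses a Gaussian falloff and a sine wobble as hypothetical stand-ins for the project's actual Math CHOP and Noise settings; none of the names come from TouchDesigner.

```python
import math
from collections import deque


class JointTrail:
    """Keeps the last N joint positions, similar in spirit to a Trail CHOP window."""

    def __init__(self, length):
        self.history = deque(maxlen=length)

    def add(self, x, y):
        self.history.append((x, y))


def scanline_offsets(trail, line_ys, xs, amplitude=0.2, sigma=0.15, audio_level=0.0, t=0.0):
    """Return the displaced height of each sample point on each horizontal scanline."""
    lines = []
    n = len(trail.history)
    for ly in line_ys:
        row = []
        for x in xs:
            push = 0.0
            for age, (jx, jy) in enumerate(reversed(trail.history)):
                weight = 1.0 - age / n  # newest positions push hardest
                d2 = (x - jx) ** 2 + (ly - jy) ** 2
                push += weight * math.exp(-d2 / (2 * sigma ** 2))
            # Hypothetical stand-in for audio-driven noise: a wobble scaled by loudness.
            wobble = audio_level * 0.05 * math.sin(12.0 * x + 3.0 * t)
            row.append(ly + amplitude * push + wobble)
        lines.append(row)
    return lines


trail = JointTrail(length=5)
for i in range(8):
    trail.add(0.3 + 0.05 * i, 0.5)  # a hand sweeping to the right

xs = [i / 10 for i in range(11)]
lines = scanline_offsets(trail, [0.4, 0.5, 0.6], xs, audio_level=0.8, t=1.0)
print([round(v, 3) for v in lines[1]])
```

Weighting older trail samples less makes the distortion linger briefly behind the moving body and then relax, which approximates the persistent after-image described above.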

Infinite Wireframe Echoes

In this TouchDesigner study, I explored how recursive feedback can breathe life into simple 3D geometry, turning a basic wireframe cube into a glowing, infinite tunnel. I built a Feedback TOP loop with Transform and Bloom TOPs that creates a striking sense of receding depth, drawing the viewer toward the center of the form. The experience bridges the digital and physical worlds by pairing real-time audio analysis with Mouse In CHOP tracking, so that sound and user movement directly dictate the cube's spatial orientation and pulse. By refining these inputs through Lag and Math CHOPs, I achieved a seamless, vibrant neon aesthetic and a generative environment that feels both highly responsive and organic.
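The tunnel depth follows from simple geometry: if each trip through the loop scales the previous frame by a factor below 1 and dims it by a decay factor, echo k has size scale^k and brightness decay^k. The plain-Python sketch below lists the echoes that stay above a visibility threshold. The parameter values (0.9 scale, 0.8 decay, 5 degrees of spin, 0.05 threshold) are hypothetical, not the study's settings.

```python
import math


def tunnel_echoes(scale=0.9, decay=0.8, spin=5.0, threshold=0.05):
    """Return (size, brightness, angle_deg) for every echo still brighter than `threshold`.

    Each trip around the loop scales the previous frame by `scale`, rotates it by
    `spin` degrees and dims it by `decay`, so echo k has size scale**k and
    brightness decay**k.
    """
    if not 0.0 < decay < 1.0 or threshold <= 0.0:
        raise ValueError("need 0 < decay < 1 and threshold > 0, or the loop never fades")
    echoes = []
    k = 0
    while decay ** k >= threshold:
        echoes.append((scale ** k, decay ** k, spin * k))
        k += 1
    return echoes


def visible_echo_count(decay, threshold):
    """Closed form for the same count (ignoring floating-point edge cases at exact powers)."""
    return math.floor(math.log(threshold) / math.log(decay)) + 1


for size, brightness, angle in tunnel_echoes()[:4]:
    print(f"size={size:.3f} brightness={brightness:.3f} angle={angle:.1f}")
print(len(tunnel_echoes()), visible_echo_count(0.8, 0.05))
```

Note that the number of visible echoes depends only on the decay and the threshold; the scale factor controls how tightly those echoes pack toward the vanishing point.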

Spiraling Vertex

A standard sphere is reimagined as a shifting, sculptural entity in this TouchDesigner study, which utilizes procedural frameworks shared by the Acrylicode YouTube channel. The geometry undergoes continuous deformation through real-time Noise TOP modulations and Twist SOP operations, resulting in the undulating, spiraled movement seen in the final output. To ground the object within a virtual environment, a Cone Light and Render TOP were implemented to cast dynamic shadows across a floor plane. The final aesthetic is defined by a multi-color Ramp TOP converted into instancing data, with a Bloom filter applied to emphasize the fluid, luminous complexity of the motion.
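The three geometric ingredients here (radial noise displacement, a twist about the vertical axis, and colour sampled from a ramp) can each be written in a few lines. This plain-Python sketch is a conceptual analogue, not Twist SOP or Ramp TOP code: the sin/cos product is a hypothetical stand-in for the Noise TOP, the twist angle is assumed to grow linearly with height, and the palette in `STOPS` is invented for illustration.

```python
import math


def sphere_points(rings=8, segments=16, radius=1.0):
    """Points on a UV sphere, skipping the poles to keep the sketch short."""
    pts = []
    for i in range(1, rings):
        phi = math.pi * i / rings
        for j in range(segments):
            theta = 2 * math.pi * j / segments
            pts.append((radius * math.sin(phi) * math.cos(theta),
                        radius * math.cos(phi),
                        radius * math.sin(phi) * math.sin(theta)))
    return pts


def twist_y(point, strength):
    """Rotate a point about the Y axis by an angle proportional to its height."""
    x, y, z = point
    a = strength * y
    c, s = math.cos(a), math.sin(a)
    return (x * c - z * s, y, x * s + z * c)


def displace(point, t, amount=0.1):
    """Scale a point along its radial direction by a smooth, time-varying factor."""
    x, y, z = point
    n = math.sin(3 * x + t) * math.cos(3 * z - t) * math.sin(2 * y + 0.5 * t)
    k = 1.0 + amount * n  # n is in [-1, 1], so the radius stays within 10% of 1
    return (x * k, y * k, z * k)


def ramp_color(u, stops):
    """Linearly interpolate an RGB colour from sorted (position, (r, g, b)) stops."""
    u = min(max(u, stops[0][0]), stops[-1][0])
    for (p0, c0), (p1, c1) in zip(stops, stops[1:]):
        if u <= p1:
            f = 0.0 if p1 == p0 else (u - p0) / (p1 - p0)
            return tuple(a + f * (b - a) for a, b in zip(c0, c1))
    return stops[-1][1]


STOPS = [(0.0, (0.1, 0.0, 0.4)), (0.5, (0.9, 0.2, 0.5)), (1.0, (1.0, 0.8, 0.2))]


def frame(points, t, twist_strength=1.5):
    """Displace, twist and colour every point for one moment in time."""
    out = []
    for p in points:
        x, y, z = twist_y(displace(p, t), twist_strength)
        u = (y + 1.2) / 2.4  # normalise height, assuming the radius stays below 1.2
        out.append(((x, y, z), ramp_color(u, STOPS)))
    return out


pts = sphere_points()
print(frame(pts, t=0.5)[0])
```

Applying the twist after the displacement means the noise bumps themselves get wound into spirals, which is one plausible reading of the undulating, spiraled motion described above.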

Schiaparelli

As the final project for my Interactive Content Creation course, I designed and developed an in-store installation to engage customers with the exclusive Schiaparelli jewellery line. Built in TouchDesigner, the experience creates a real-time, dynamic visual narrative. The installation features dual-layer audio reactivity: the Schiaparelli logo and a promotional video are each modulated by a distinct frequency band of an analyzed and edited audio track. The central interactive feature, however, is Kinect sensor integration, which lets customer movement directly manipulate video-based particles. I also applied picture-particle techniques to a model photo, breaking a featured piece of jewellery down into thousands of generative particles that customers can likewise disturb with their movement. Together, these layers make the display a truly immersive and responsive brand experience.
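The dual-layer idea comes down to measuring energy in two separate frequency bands and routing each to its own visual parameter. This is a minimal plain-Python sketch using a naive DFT so it has no dependencies. A real pipeline would use an FFT (for example TouchDesigner's Audio Spectrum CHOP) with windowing. The band limits, the sample rate, and the `logo_scale` and `video_opacity` mappings are hypothetical, not the installation's actual settings.

```python
import cmath
import math


def band_energy(samples, rate, lo_hz, hi_hz):
    """Sum of normalized DFT magnitudes for bins whose frequency lies in [lo_hz, hi_hz)."""
    n = len(samples)
    total = 0.0
    for k in range(1, n // 2):
        freq = k * rate / n
        if lo_hz <= freq < hi_hz:
            coeff = sum(samples[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
            total += abs(coeff) / n
    return total


def drive_layers(samples, rate):
    """Route the low band to the logo and the high band to the video (hypothetical mapping)."""
    low = band_energy(samples, rate, 20, 250)
    high = band_energy(samples, rate, 2000, 4000)
    return {"logo_scale": 1.0 + low, "video_opacity": min(1.0, high * 4.0)}


RATE, N = 8000, 256  # bin spacing of 31.25 Hz
bass = [math.sin(2 * math.pi * 125 * i / RATE) for i in range(N)]
shimmer = [math.sin(2 * math.pi * 3000 * i / RATE) for i in range(N)]
mix = [a + b for a, b in zip(bass, shimmer)]

print(drive_layers(bass, RATE))
print(drive_layers(mix, RATE))
```

Keeping the bands far apart means a kick drum can pulse the logo without also flashing the video, which is the point of splitting one track into two control signals.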

Metaball Grid Study

Centered on the interaction between a virtual attractor and a structural grid, this TouchDesigner study uses techniques explored through The Interactive & Immersive HQ YouTube channel. A Metaball SOP combined with a Force SOP serves as the primary mechanism, allowing a user-controlled attractor to physically displace a field of thousands of points. Because the Particle SOP is configured to calculate repulsion in real time, the grid reacts with organic, fluid-like ripples as the mouse moves. To enhance the depth of this digital environment, I applied a Phong MAT and a Bloom filter, transforming the raw coordinate data into a high-contrast, glowing topography that emphasizes the tactile nature of the simulation.
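The ripple behaviour can be approximated by two forces per point: a repulsion from the attractor that fades to zero at a fixed radius (a metaball-style falloff), and a spring pulling each point back to its rest position, with damping so the motion settles. This plain-Python sketch is a hand-rolled analogue of the Metaball, Force, and Particle SOP setup, not its actual solver. The falloff curve and the constants for `radius`, `strength`, `spring`, and `damping` are hypothetical.

```python
import math


def make_grid(n):
    """Rest positions of an n x n grid spanning the unit square."""
    return [(c / (n - 1), r / (n - 1)) for r in range(n) for c in range(n)]


def step_grid(points, rest, vel, attractor, radius=0.3, strength=0.02, spring=0.1, damping=0.85):
    """Advance every point one step under repulsion, spring return, and damping (in place)."""
    ax, ay = attractor
    for i, (x, y) in enumerate(points):
        fx = fy = 0.0
        dx, dy = x - ax, y - ay
        d = math.hypot(dx, dy)
        if 0 < d < radius:
            falloff = (1 - d / radius) ** 2  # smooth falloff that reaches zero at the radius
            fx += strength * falloff * dx / d
            fy += strength * falloff * dy / d
        # Pull back toward the rest position so the grid ripples and then settles.
        fx += spring * (rest[i][0] - x)
        fy += spring * (rest[i][1] - y)
        vx = (vel[i][0] + fx) * damping
        vy = (vel[i][1] + fy) * damping
        vel[i] = (vx, vy)
        points[i] = (x + vx, y + vy)


n = 11
rest = make_grid(n)
points = list(rest)
vel = [(0.0, 0.0)] * len(points)

step_grid(points, rest, vel, attractor=(0.45, 0.5))  # attractor just left of the centre point
print(points[60])  # the centre point (0.5, 0.5) is pushed to the right
```

Because the spring and damping act every step, points overshoot their rest positions slightly after the attractor passes and then settle, which is what reads as a fluid ripple rather than a rigid dent.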